Achieving Top Network Performance - Red Hat Summit

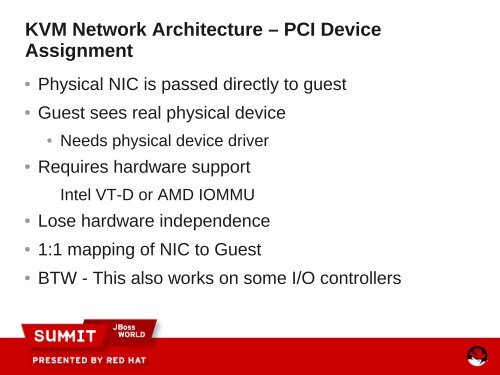

KVM Network Architecture – PCI Device Assignment

- Physical NIC is passed directly to guest
- Guest sees real physical device
- Needs physical device driver
- Requires hardware support: Intel VT-d or AMD IOMMU
- Lose hardware independence
- 1:1 mapping of NIC to guest
- BTW - This also works on some I/O controllers

KVM Network Architecture – Device Assignment
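The hardware requirement above (Intel VT-d or AMD IOMMU) can be sanity-checked on a host before attempting device assignment. The following is a minimal sketch, not taken from the slides: the helper name `iommu_requested` is hypothetical, and it assumes the IOMMU is requested through the `intel_iommu=on` or `amd_iommu=on` kernel boot parameters. A missing parameter is not conclusive, since firmware settings and kernel defaults also matter.

```python
from pathlib import Path

# Boot parameters commonly used to request IOMMU support (assumption:
# the host relies on these rather than on a kernel built-in default).
IOMMU_PARAMS = {"intel_iommu=on", "amd_iommu=on"}


def iommu_requested(cmdline: str) -> bool:
    """Return True if the kernel command line explicitly requests an IOMMU."""
    return any(token in IOMMU_PARAMS for token in cmdline.split())


if __name__ == "__main__":
    try:
        # /proc/cmdline holds the parameters the running kernel was booted with.
        current = Path("/proc/cmdline").read_text()
    except OSError:
        print("could not read /proc/cmdline (not a Linux host?)")
    else:
        state = "requested" if iommu_requested(current) else "not requested"
        print(f"IOMMU {state} on the kernel command line")
```

This only inspects boot parameters; it does not confirm that VT-d or the AMD IOMMU is enabled in firmware, so the kernel boot log remains the place to verify that.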

- Page 2 and 3: Achieving Top Network Performance Mar

- Page 4 and 5: Take Aways: Awareness of the

- Page 6 and 7: Some Quick Disclaimers: We d

- Page 8 and 9: Agenda: Why Bother? 40 Gbit, g

- Page 10 and 11: Teaser 1 - Gluster: effect of net.cor

- Page 12 and 13: Teaser 3 - latency: Font size 28

- Page 14 and 15: Memory Characteristics: Memo

- Page 16 and 17: “Issues” that NUMA makes visibl

- Page 18 and 19: NUMA - Latency: [root@perf ~]# numact

- Page 20 and 21: PCI Bus - and related issues

- Page 22 and 23: 40 Gbit Gen3 vs 10 Gbit PCI Gen2 la

- Page 24 and 25: CPU Characteristics - Basics

- Page 26 and 27: CPU - Performance Governors: echo

- Page 28 and 29: CSTATE default - C7 on this configp

- Page 30 and 31: NPtcp latency vs cstates - c7 vs c0

- Page 32 and 33: RHEL6 “tuned-adm” profiles: # tun

- Page 34 and 35: Kernel Bypass Technologies - Pros a

- Page 36 and 37: Offload - Solarflare OpenOnload: Aver

- Page 38 and 39: KVM Network Architecture: Virtio Conte

- Page 40 and 41: KVM Network Architecture - vhost_ne

- Page 42 and 43: Latency comparison - RHEL 6: Network

- Page 44 and 45: Host CPU Consumption virtio vs vhos

- Page 48 and 49: KVM Network Architecture - SR-IOV

- Page 50 and 51: KVM Architecture - Device Assignmen

- Page 52 and 53: RHEL6 - new features

- Page 54 and 55: RHEL6 - new features: Add getso

- Page 56 and 57: Receive Steering - improved message

- Page 58 and 59: Tuning Knobs - Overview: By

- Page 60 and 61: sysctl - View and set /proc/sys set

- Page 62 and 63: sysctl - TCP related settings: TCP

- Page 64 and 65: Why Bother? - Teaser 1: effect of ne

- Page 67 and 68: lspci - details: # lspci -vvvs 81:00.

- Page 69 and 70: Why Bother - A quick teaser: ifco

- Page 71 and 72: Tuning - first pass bottleneck resol

- Page 73 and 74: Tuning - second pass setup

- Page 75 and 76: Tuning - irqbalance disabled, netpe

- Page 77 and 78: Tuning - second pass: mpstat on th

- Page 79 and 80: Tuning - step 3: Try TCP_SENDFILE #

- Page 81 and 82: Tuning - checking ethtool -S eth4

- Page 83 and 84: Tuning - step 4: More buffers # ./n

- Page 85 and 86: Tuning - sanity check: Sometimes m

- Page 87 and 88: Throttling - cgroups in Action

- Page 89 and 90: Cgroup default mount points: # cat /e

- Page 91 and 92: cgroups: [root@dhcp1001950 ~]#

- Page 93 and 94: incorrect bindings