aligned end characters (similar to the “rules” extracted by [Mulloni and Pekar, 2006]). Inthe above example, su$-ss$ is a mismatch with “s” and “$” as the aligned end characters.Two sets of features are taken from each mismatch, one that includes the beginning/endingaligned characters as context and one that does not. For example, <strong>for</strong> the endings of theFrench/English pair (économique,economic), we include both the substring pairs ique$:ic$and que:c as features.One consideration is whether substring features should be binary presence/absence, orthe count of the feature in the pair normalized by the length of the longer word. We investigateboth of these approaches in our experiments. Also, there is no reason not to include thescores of baseline approaches like NED, LCSR, PREFIX or DICE as features in the representationas well. Features like the lengths of the two words and the difference in lengthsof the words have also proved to be useful in preliminary experiments. Semantic featureslike frequency similarity or contextual similarity might also be included to help determinecognation between words that are not present in a translation lexicon or bitext.7.5 ExperimentsSection 7.3 introduced two high-precision methods <strong>for</strong> generating labeled cognate pairs:using the word alignments from a bilingual corpus or using the entries in a translation lexicon.We investigate both of these methods in our experiments. In each case, we generatesets of labeled word pairs <strong>for</strong> training, testing, and development. The proportion of positiveexamples in the bitext-labeled test sets range between 1.4% and 1.8%, while rangingbetween 1.0% and 1.6% <strong>for</strong> the dictionary data. 2For the discriminative methods, we use a popular support vector machine (SVM) learningpackage called SVM light [Joachims, 1999a]. As Chapter 2 describes, SVMs are maximummarginclassifiers that achieve good per<strong>for</strong>mance on a range of tasks. In each case, we learna linear kernel on the training set pairs and tune the parameter that trades-off training errorand margin on the development set. We apply our classifier to the test set and score the pairsby their positive distance from the SVM classification hyperplane (also done by [Bilenkoand Mooney, 2003] with their token-based SVM similarity measure).We also score the test sets using traditional orthographic similarity measures PREFIX,DICE, LCSR, and NED, an average of these four, and [Kondrak, 2005]’s LCSF. We alsouse the log of the edit probability from the stochastic decoder of [Ristad and Yianilos, 1998](normalized by the length of the longer word) and [Tiedemann, 1999]’s highest per<strong>for</strong>mingsystem (Approach #3). Both use only the positive examples in our training set. Our evaluationmetric is 11-pt average precision on the score-sorted pair lists (also used by [Kondrakand Sherif, 2006]).7.5.1 Bitext ExperimentsFor the bitext-based annotation, we use publicly-available word alignments from the Europarlcorpus, automatically generated by GIZA++ <strong>for</strong> French-English (Fr), Spanish-English(Es) and German-English (De) [Koehn, 2005; Koehn and Monz, 2006]. Initial cleaning ofthese noisy word pairs is necessary. We thus remove all pairs with numbers, punctuation,a capitalized English word, and all words that occur fewer than ten times. We also remove2 The cognate data sets used in our experiments are available at http://www.cs.ualberta.ca/˜bergsma/Cognates/101

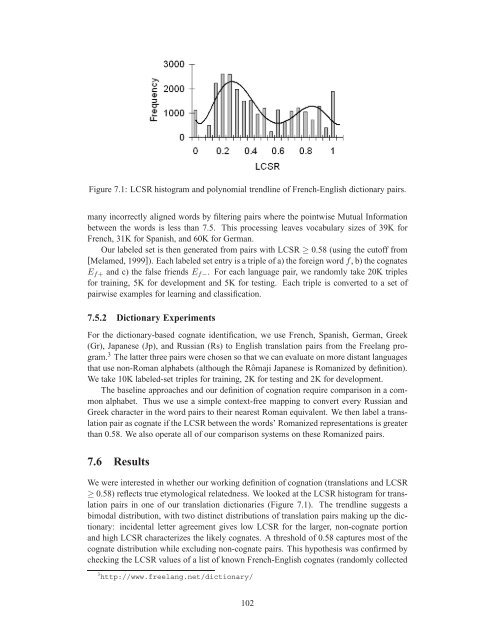

Figure 7.1: LCSR histogram and polynomial trendline of French-English dictionary pairs.many incorrectly aligned words by filtering pairs where the pointwise Mutual In<strong>for</strong>mationbetween the words is less than 7.5. This processing leaves vocabulary sizes of 39K <strong>for</strong>French, 31K <strong>for</strong> Spanish, and 60K <strong>for</strong> German.Our labeled set is then generated from pairs with LCSR ≥ 0.58 (using the cutoff from[Melamed, 1999]). Each labeled set entry is a triple of a) the <strong>for</strong>eign wordf, b) the cognatesE f+ and c) the false friends E f− . For each language pair, we randomly take 20K triples<strong>for</strong> training, 5K <strong>for</strong> development and 5K <strong>for</strong> testing. Each triple is converted to a set ofpairwise examples <strong>for</strong> learning and classification.7.5.2 Dictionary ExperimentsFor the dictionary-based cognate identification, we use French, Spanish, German, Greek(Gr), Japanese (Jp), and Russian (Rs) to English translation pairs from the Freelang program.3 The latter three pairs were chosen so that we can evaluate on more distant languagesthat use non-Roman alphabets (although the Rômaji Japanese is Romanized by definition).We take 10K labeled-set triples <strong>for</strong> training, 2K <strong>for</strong> testing and 2K <strong>for</strong> development.The baseline approaches and our definition of cognation require comparison in a commonalphabet. Thus we use a simple context-free mapping to convert every Russian andGreek character in the word pairs to their nearest Roman equivalent. We then label a translationpair as cognate if the LCSR between the words’ Romanized representations is greaterthan 0.58. We also operate all of our comparison systems on these Romanized pairs.7.6 ResultsWe were interested in whether our working definition of cognation (translations and LCSR≥ 0.58) reflects true etymological relatedness. We looked at the LCSR histogram <strong>for</strong> translationpairs in one of our translation dictionaries (Figure 7.1). The trendline suggests abimodal distribution, with two distinct distributions of translation pairs making up the dictionary:incidental letter agreement gives low LCSR <strong>for</strong> the larger, non-cognate portionand high LCSR characterizes the likely cognates. A threshold of 0.58 captures most of thecognate distribution while excluding non-cognate pairs. This hypothesis was confirmed bychecking the LCSR values of a list of known French-English cognates (randomly collected3 http://www.freelang.net/dictionary/102

- Page 1 and 2:

University of AlbertaLarge-Scale Se

- Page 5 and 6:

Table of Contents1 Introduction 11.

- Page 7 and 8:

7 Alignment-Based Discriminative St

- Page 9 and 10:

List of Figures2.1 The linear class

- Page 11 and 12:

drawn in by establishing a partial

- Page 13 and 14:

(2) “He saw the trophy won yester

- Page 15 and 16:

actual sentence said, “My son’s

- Page 17 and 18:

Uses Web-Scale N-grams Auto-Creates

- Page 19 and 20:

spelling correction, and the identi

- Page 21 and 22:

Chapter 2Supervised and Semi-Superv

- Page 23 and 24:

emphasis on “deliverables and eva

- Page 25 and 26:

Figure 2.1: The linear classifier h

- Page 27 and 28:

The above experimental set-up is so

- Page 29 and 30:

and discriminative models therefore

- Page 31 and 32:

their slack value). In practice, I

- Page 33 and 34:

One way to find a better solution i

- Page 35 and 36:

Figure 2.2: Learning from labeled a

- Page 37 and 38:

algorithm). Yarowsky used it for wo

- Page 39 and 40:

Learning with Natural Automatic Exa

- Page 41 and 42:

positive examples from any collecti

- Page 43 and 44:

generated word clusters. Several re

- Page 45 and 46:

One common disambiguation task is t

- Page 47 and 48:

3.2.2 Web-Scale Statistics in NLPEx

- Page 49 and 50:

For each target wordv 0 , there are

- Page 51 and 52:

ut without counts for the class pri

- Page 53 and 54:

Accuracy (%)10090807060SUPERLMSUMLM

- Page 55 and 56:

We also follow Carlson et al. [2001

- Page 57 and 58:

Set BASE [Golding and Roth, 1999] T

- Page 59 and 60: pronoun (#3) guarantees that at the

- Page 61 and 62: 807876F-Score747270Stemmed patterns

- Page 63 and 64: anaphoricity by [Denis and Baldridg

- Page 65 and 66: ter, we present a simple technique

- Page 67 and 68: We seek weights such that the class

- Page 69 and 70: each optimum performance is at most

- Page 71 and 72: We now show that ¯w T (diag(¯p)

- Page 73 and 74: Training ExamplesSystem 10 100 1K 1

- Page 75 and 76: Since we wanted the system to learn

- Page 77 and 78: Chapter 5Creating Robust Supervised

- Page 79 and 80: § In-Domain (IN) Out-of-Domain #1

- Page 81 and 82: Adjective ordering is also needed i

- Page 83 and 84: Accuracy (%)10095908580757065601001

- Page 85 and 86: System IN O1 O2Baseline 66.9 44.6 6

- Page 87 and 88: 90% of the time in Gutenberg. The L

- Page 89 and 90: VBN/VBD distinction by providing re

- Page 91 and 92: other tasks we only had a handful o

- Page 93 and 94: without the need for manual annotat

- Page 95 and 96: DSP uses these labels to identify o

- Page 97 and 98: Semantic classesMotivated by previo

- Page 99 and 100: empirical Pr(n|v) in Equation (6.2)

- Page 101 and 102: Verb Plaus./Implaus. Resnik Dagan e

- Page 103 and 104: SystemAccMost-Recent Noun 17.9%Maxi

- Page 105 and 106: Chapter 7Alignment-Based Discrimina

- Page 107 and 108: ious measures to learn the recurren

- Page 109: how labeled word pairs can be colle

- Page 113 and 114: 0.711-pt Average Precision0.60.50.4

- Page 115 and 116: Fr-En Bitext Es-En Bitext De-En Bit

- Page 117 and 118: Chapter 8Conclusions and Future Wor

- Page 119 and 120: 8.3 Future WorkThis section outline

- Page 121 and 122: My focus is thus on enabling robust

- Page 123 and 124: [Bergsma and Cherry, 2010] Shane Be

- Page 125 and 126: [Church and Mercer, 1993] Kenneth W

- Page 127 and 128: [Grefenstette, 1999] Gregory Grefen

- Page 129 and 130: [Koehn, 2005] Philipp Koehn. Europa

- Page 131 and 132: [Mihalcea and Moldovan, 1999] Rada

- Page 133 and 134: [Ristad and Yianilos, 1998] Eric Sv

- Page 135 and 136: [Wang et al., 2008] Qin Iris Wang,

- Page 137: NNP noun, proper, singular Motown V