Large scale and hybrid computing with CP2K - Prace Training Portal


GPU Kernel Description

Compute a batch (Cij, Aik, Bkj) of small matrix block products (5x5 .. 23x23).

- Not too different from the batched dgemm (Ci = Ci + Ai*Bi) available in CUBLAS 4.1.
- CUBLAS batched dgemm reaches only ~50 Gflops for 23x23.

Need to:

1. Exploit dependencies (data reuse): sort the batch on Cij so we have only 2 data reads/writes instead of 4.
2. Write a better compute kernel: hand-optimize a CUDA kernel for size 23x23 (playing with registers, occupancy, etc.).

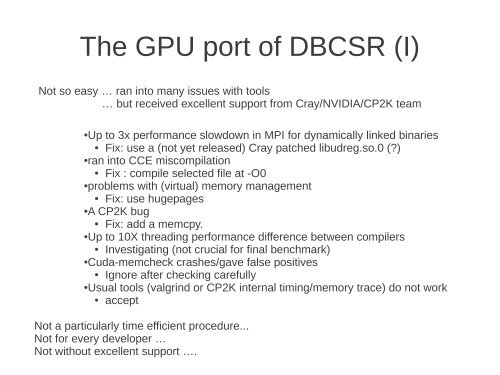

The GPU port of DBCSR (I)

Not so easy … ran into many issues with tools … but received excellent support from Cray/NVIDIA/CP2K team.

- Up to 3x performance slowdown in MPI for dynamically linked binaries
  - Fix: use a (not yet released) Cray patched libudreg.so.0 (?)
- Ran into CCE miscompilation
  - Fix: compile selected file at -O0
- Problems with (virtual) memory management
  - Fix: use hugepages
- A CP2K bug
  - Fix: add a memcpy
- Up to 10x threading performance difference between compilers
  - Investigating (not crucial for final benchmark)
- cuda-memcheck crashes / gave false positives
  - Ignore after checking carefully
- Usual tools (valgrind or CP2K internal timing/memory trace) do not work
  - Accept

Not a particularly time-efficient procedure ... Not for every developer … Not without excellent support …

