MACHINE LEARNING TECHNIQUES - LASA


While hierarchical algorithms build clusters gradually (as crystals are grown), partitioning algorithms learn clusters directly. In doing so, they either try to discover clusters by iteratively relocating points between subsets, or try to identify clusters as areas highly populated with data. Algorithms of the first kind are surveyed in the section Partitioning Relocation Methods. They are further categorized into k-means methods (different schemes, initialization, optimization, harmonic means, extensions) and probabilistic clustering or density-based partitioning (e.g. soft K-means and Mixture of Gaussians). Such methods concentrate on how well points fit into their clusters and tend to build clusters of proper convex shapes.

When reading the following section, keep in mind that the major properties one is concerned with when designing a clustering method include:

• Type of attributes the algorithm can handle
• Scalability to large datasets
• Ability to work with high-dimensional data
• Ability to find clusters of irregular shape
• Handling of outliers
• Time complexity (when there is no confusion, we use the term complexity)
• Data order dependency
• Labeling or assignment (hard or strict vs. soft or fuzzy)
• Reliance on a priori knowledge and user-defined parameters
• Interpretability of results

3.1.1 Hierarchical Clustering

In hierarchical clustering, the data is partitioned iteratively, either by agglomerating the data or by dividing the data. The result of such an algorithm is best represented by a dendrogram.

Figure 3-2: Dendrogram

© A.G.Billard 2004 – Last Update March 2011

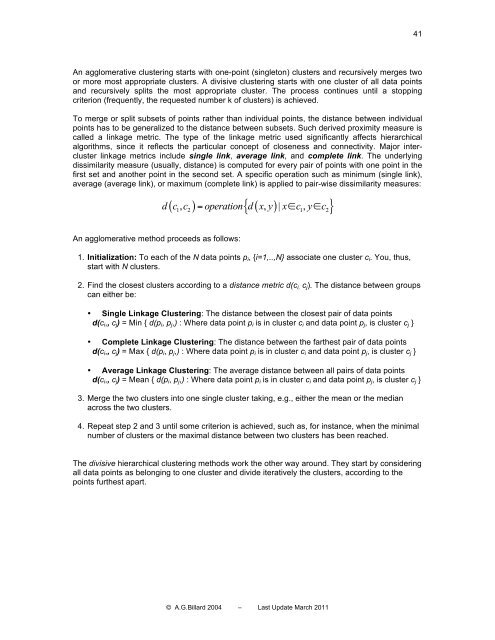

An agglomerative clustering starts with one-point (singleton) clusters and recursively merges the two or more most appropriate clusters. A divisive clustering starts with one cluster containing all data points and recursively splits the most appropriate cluster. The process continues until a stopping criterion (frequently, the requested number k of clusters) is met.

To merge or split subsets of points rather than individual points, the distance between individual points has to be generalized to a distance between subsets. Such a derived proximity measure is called a linkage metric. The type of linkage metric used significantly affects hierarchical algorithms, since it reflects a particular concept of closeness and connectivity. Major inter-cluster linkage metrics include single link, average link, and complete link. The underlying dissimilarity measure (usually, distance) is computed for every pair of points with one point in the first set and another point in the second set. A specific operation such as minimum (single link), average (average link), or maximum (complete link) is then applied to the pair-wise dissimilarity measures:

$$d(c_1, c_2) = \operatorname{operation}\{\, d(x, y) \mid x \in c_1,\ y \in c_2 \,\}$$

An agglomerative method proceeds as follows:

1. Initialization: To each of the N data points $p_i$, $i = 1, \dots, N$, associate one cluster $c_i$. You thus start with N clusters.

2. Find the closest clusters according to a distance metric $d(c_i, c_j)$. The distance between groups can be either:

• Single Linkage Clustering: the distance between the closest pair of data points,
$$d(c_i, c_j) = \min\{\, d(p_i, p_j) : p_i \in c_i,\ p_j \in c_j \,\}$$

• Complete Linkage Clustering: the distance between the farthest pair of data points,
$$d(c_i, c_j) = \max\{\, d(p_i, p_j) : p_i \in c_i,\ p_j \in c_j \,\}$$

• Average Linkage Clustering: the average distance between all pairs of data points,
$$d(c_i, c_j) = \operatorname{mean}\{\, d(p_i, p_j) : p_i \in c_i,\ p_j \in c_j \,\}$$

3. Merge the two clusters into one single cluster taking, e.g., either the mean or the median across the two clusters.

4. Repeat steps 2 and 3 until some criterion is met, for instance when the minimal number of clusters or the maximal distance between two clusters has been reached.

The divisive hierarchical clustering methods work the other way around. They start by considering all data points as belonging to one cluster and iteratively divide the clusters, according to the points furthest apart.
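As an illustration of the agglomerative procedure (steps 1 to 4), the following is a minimal Python sketch, not the implementation used in these notes. It rests on several assumptions: points are tuples of numbers, the point-wise dissimilarity d is the Euclidean distance, the stopping criterion is a requested number k of clusters, and a merged cluster is kept as the union of its points (which is what the linkage metrics require) rather than being summarized by a mean or median. The function names `linkage_distance` and `agglomerative` are hypothetical and chosen only for this example.

```python
import math
from itertools import product


def linkage_distance(c1, c2, operation="single"):
    """Distance between two clusters (lists of points) under a linkage metric."""
    # Dissimilarity for every pair with one point in c1 and one point in c2.
    pairwise = [math.dist(x, y) for x, y in product(c1, c2)]
    if operation == "single":      # closest pair of points
        return min(pairwise)
    if operation == "complete":    # farthest pair of points
        return max(pairwise)
    if operation == "average":     # mean over all pairs
        return sum(pairwise) / len(pairwise)
    raise ValueError(f"unknown linkage operation: {operation}")


def agglomerative(points, k, operation="single"):
    """Merge clusters bottom-up until only k clusters remain."""
    # Step 1: every data point starts as its own singleton cluster.
    clusters = [[p] for p in points]
    while len(clusters) > k:
        # Step 2: find the closest pair of clusters under the linkage metric.
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                d = linkage_distance(clusters[i], clusters[j], operation)
                if best is None or d < best[0]:
                    best = (d, i, j)
        _, i, j = best
        # Step 3: merge the two clusters (assumption: keep the union of points).
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
        # Step 4: the loop repeats steps 2 and 3 until k clusters are left.
    return clusters


if __name__ == "__main__":
    data = [(0, 0), (0, 1), (5, 5), (5, 6), (10, 0)]
    for cluster in agglomerative(data, k=3, operation="single"):
        print(cluster)
```

This brute-force version recomputes all pair-wise distances at every iteration, so it is only suitable for small datasets. Replacing the `len(clusters) > k` test with a threshold on the smallest inter-cluster distance would give the other stopping criterion mentioned in step 4.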

