Lecture 18 Subgradients



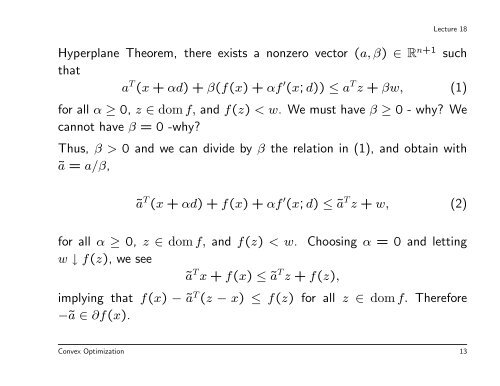

Hyperplane Theorem, there exists a nonzero vector $(a, \beta) \in \mathbb{R}^{n+1}$ such that

$$a^T (x + \alpha d) + \beta \bigl(f(x) + \alpha f'(x; d)\bigr) \le a^T z + \beta w, \tag{1}$$

for all $\alpha \ge 0$, $z \in \operatorname{dom} f$, and $f(z) < w$. We must have $\beta \ge 0$. Why? We cannot have $\beta = 0$. Why?
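One possible way to answer the two questions is sketched below. It assumes that $x$ lies in the interior of $\operatorname{dom} f$, a hypothesis that is not visible in this excerpt and is presumably stated earlier in the proof. The scalar $\epsilon$ is introduced here only for illustration.

```latex
\begin{align*}
&\text{Suppose } \beta < 0.\ \text{Fix } \alpha = 0 \text{ and } z = x \text{ in (1), and let } w \to +\infty. \\
&\quad \text{Then } a^T x + \beta w \to -\infty, \text{ while the left side } a^T x + \beta f(x)
   \text{ stays fixed: a contradiction. Hence } \beta \ge 0. \\[4pt]
&\text{Suppose } \beta = 0.\ \text{Then (1) with } \alpha = 0 \text{ reads }
   a^T x \le a^T z \ \text{ for all } z \in \operatorname{dom} f. \\
&\quad \text{For small } \epsilon > 0,\ z = x - \epsilon a \in \operatorname{dom} f
   \ \text{(assumed: } x \in \operatorname{int}\operatorname{dom} f\text{), so }
   0 \le -\epsilon \|a\|^2, \text{ i.e. } a = 0. \\
&\quad \text{This contradicts } (a, \beta) \ne 0. \text{ Hence } \beta \ne 0.
\end{align*}
```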

Thus, $\beta > 0$, and we can divide relation (1) by $\beta$ to obtain, with $\tilde{a} = a/\beta$,

$$\tilde{a}^T (x + \alpha d) + f(x) + \alpha f'(x; d) \le \tilde{a}^T z + w, \tag{2}$$

for all $\alpha \ge 0$, $z \in \operatorname{dom} f$, and $f(z) < w$. Choosing $\alpha = 0$ and letting $w \downarrow f(z)$, we see

$$\tilde{a}^T x + f(x) \le \tilde{a}^T z + f(z),$$

implying that $f(x) - \tilde{a}^T (z - x) \le f(z)$ for all $z \in \operatorname{dom} f$. Therefore $-\tilde{a} \in \partial f(x)$.
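The conclusion says that $s = -\tilde{a}$ satisfies the subgradient inequality $f(z) \ge f(x) + s^T (z - x)$ for all $z \in \operatorname{dom} f$. As a rough numerical sanity check of that inequality, the sketch below uses the $\ell_1$ norm as the convex function. This is an illustrative choice that does not come from the lecture, and the function names `f`, `subgradient`, `subgradient_gap`, and `min_gap` are hypothetical. Random sampling can only fail to find a violation; it does not prove the inequality.

```python
import random

def f(x):
    """Convex test function: the l1 norm (illustrative choice, not from the lecture)."""
    return sum(abs(xi) for xi in x)

def subgradient(x):
    """One subgradient of the l1 norm at x: the sign vector, with 0 where x_i == 0."""
    return [(xi > 0) - (xi < 0) for xi in x]

def subgradient_gap(x, s, z):
    """Return f(z) - f(x) - s^T (z - x); nonnegative for all z iff s is a subgradient at x."""
    return f(z) - f(x) - sum(si * (zi - xi) for si, xi, zi in zip(s, x, z))

def min_gap(x, s, trials=1000, seed=0):
    """Smallest gap observed over randomly sampled points z in the box [-5, 5]^n."""
    rng = random.Random(seed)
    return min(
        subgradient_gap(x, s, [rng.uniform(-5.0, 5.0) for _ in x])
        for _ in range(trials)
    )

x = [1.5, 0.0, -2.0]
s = subgradient(x)

# A true subgradient: no sampled z violates the inequality (up to rounding).
print(min_gap(x, s) >= -1e-9)

# A vector that is not a subgradient: a single z already gives a negative gap.
print(subgradient_gap(x, [2, 0, -1], [5.0, 0.0, -2.0]) < 0)
```

The second check shows why the inequality must hold for every $z$: one violating point is enough to rule a vector out of $\partial f(x)$.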

Convex Optimization 13