Documentation of the Evaluation of CALPUFF and Other Long ...


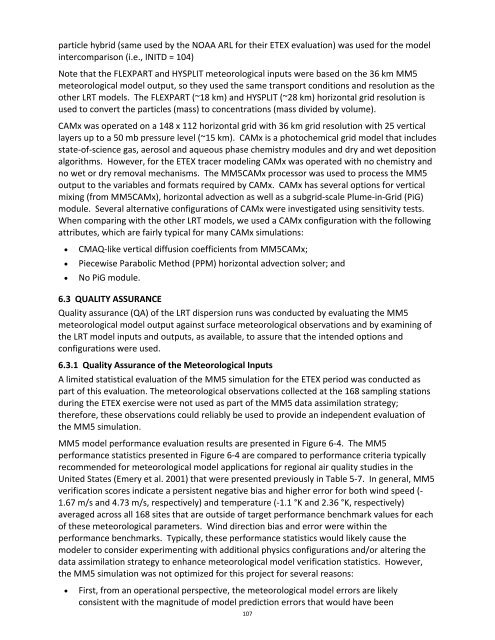

particle hybrid (the same as used by the NOAA ARL for their ETEX evaluation) was used for the model intercomparison (i.e., INITD = 104).

Note that the FLEXPART and HYSPLIT meteorological inputs were based on the 36 km MM5 meteorological model output, so they used the same transport conditions and resolution as the other LRT models. The FLEXPART (~18 km) and HYSPLIT (~28 km) horizontal grid resolutions are used to convert the particles (mass) to concentrations (mass divided by volume).

CAMx was operated on a 148 x 112 horizontal grid with 36 km grid resolution and 25 vertical layers up to a 50 mb pressure level (~15 km). CAMx is a photochemical grid model that includes state‐of‐science gas, aerosol and aqueous phase chemistry modules and dry and wet deposition algorithms. However, for the ETEX tracer modeling CAMx was operated with no chemistry and no wet or dry removal mechanisms. The MM5CAMx processor was used to process the MM5 output to the variables and formats required by CAMx. CAMx has several options for vertical mixing (from MM5CAMx) and horizontal advection, as well as a subgrid‐scale Plume‐in‐Grid (PiG) module. Several alternative configurations of CAMx were investigated using sensitivity tests. When comparing with the other LRT models, we used a CAMx configuration with the following attributes, which are fairly typical for many CAMx simulations:

• CMAQ‐like vertical diffusion coefficients from MM5CAMx;

• Piecewise Parabolic Method (PPM) horizontal advection solver; and

• No PiG module.

6.3 QUALITY ASSURANCE

Quality assurance (QA) of the LRT dispersion runs was conducted by evaluating the MM5 meteorological model output against surface meteorological observations and by examining the LRT model inputs and outputs, as available, to assure that the intended options and configurations were used.

6.3.1 Quality Assurance of the Meteorological Inputs

A limited statistical evaluation of the MM5 simulation for the ETEX period was conducted as part of this evaluation.
The meteorological observations collected at the 168 sampling stations during the ETEX exercise were not used as part of the MM5 data assimilation strategy; therefore, these observations could reliably be used to provide an independent evaluation of the MM5 simulation.

MM5 model performance evaluation results are presented in Figure 6‐4. The MM5 performance statistics presented in Figure 6‐4 are compared to performance criteria typically recommended for meteorological model applications for regional air quality studies in the United States (Emery et al. 2001) that were presented previously in Table 5‐7. In general, MM5 verification scores averaged across all 168 sites indicate a persistent negative bias and a higher error for both wind speed (‐1.67 m/s and 4.73 m/s, respectively) and temperature (‐1.1 K and 2.36 K, respectively), and these values fall outside the target performance benchmarks for each of these meteorological parameters. Wind direction bias and error were within the performance benchmarks. Performance statistics such as these would typically lead the modeler to consider experimenting with additional physics configurations and/or altering the data assimilation strategy to improve the meteorological model verification statistics. However, the MM5 simulation was not optimized for this project for several reasons:

• First, from an operational perspective, the meteorological model errors are likely consistent with the magnitude of model prediction errors that would have been experienced during the original ETEX exercise if forecast fields rather than ECMWF analysis fields had been employed. Additionally, the MM5 simulation has the added advantage of data assimilation to constrain the growth of forecast error as a function of time.

• Second, since each of the five LRT model platforms evaluated in this project is presented with the same meteorological database, a systemic degradation of performance due to advection error would have been observed if the meteorology were a primary source of model error. However, since poor model performance was only noted in one of the five models, meteorological error was not considered the primary cause of poor performance.

• Finally, since wind direction is likely one of the key meteorological parameters for LRT simulations, the operational decision to use the existing MM5 forecasts was made because the MM5 wind direction forecasts were within acceptable statistical limits.

Figure 6‐4a. ETEX MM5 model performance statistics of Bias (top) and RMSE (bottom) for wind speed and comparison with benchmarks (purple lines). Panels show WS Bias (m/s) and WS RMSE (m/s) for 23‐Oct through 27‐Oct and the average (Avg).
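The wind speed statistics discussed in Section 6.3.1 can be illustrated with a minimal sketch. It computes the mean bias and RMSE of paired predictions and observations and checks them against benchmark limits. The function names and sample wind speeds are hypothetical and are not taken from the ETEX data. The limits of ±0.5 m/s for bias and 2.0 m/s for RMSE are assumed here as the values commonly attributed to Emery et al. (2001); Table 5‐7 remains the authoritative source. Wind direction is omitted because it would need circular differences.

```python
import math

# Assumed wind speed benchmarks (commonly attributed to Emery et al. 2001).
# Table 5-7 of the report is the authoritative source for these limits.
WS_BIAS_LIMIT = 0.5  # m/s, applied to the absolute value of the bias
WS_RMSE_LIMIT = 2.0  # m/s


def mean_bias(pred, obs):
    """Mean of (predicted - observed); negative means underprediction."""
    return sum(p - o for p, o in zip(pred, obs)) / len(obs)


def rmse(pred, obs):
    """Root mean square error of the paired differences."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs))


def check_benchmarks(pred, obs, bias_limit, rmse_limit):
    """Return the statistics and whether each one meets its benchmark."""
    b = mean_bias(pred, obs)
    r = rmse(pred, obs)
    return {
        "bias": b,
        "rmse": r,
        "bias_ok": abs(b) <= bias_limit,
        "rmse_ok": r <= rmse_limit,
    }


# Hypothetical paired wind speeds (m/s), for illustration only.
obs = [5.0, 6.0, 7.0, 8.0]
pred = [4.0, 4.0, 6.0, 6.0]

stats = check_benchmarks(pred, obs, WS_BIAS_LIMIT, WS_RMSE_LIMIT)
print(stats)
```

In this made-up sample the bias of ‐1.5 m/s falls outside the assumed limit while the RMSE stays within it, which shows that the two statistics are judged against their benchmarks independently.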

