The.Algorithm.Design.Manual.Springer-Verlag.1998


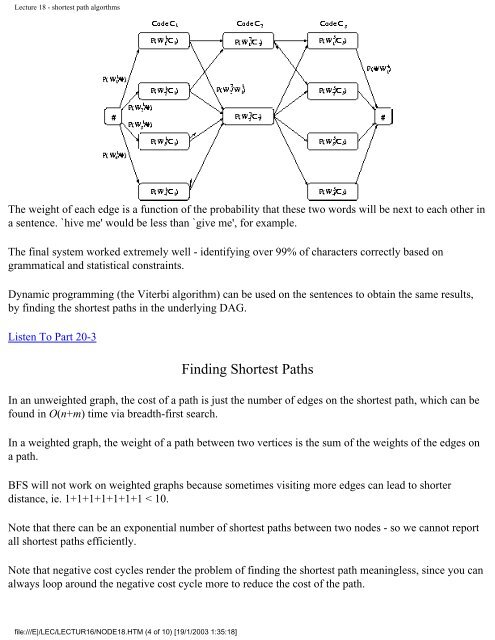

Lecture 18 - shortest path algorithms

The weight of each edge is a function of the probability that these two words will be next to each other in a sentence. The pair `hive me' would be less probable than `give me', for example.

The final system worked extremely well - identifying over 99% of characters correctly based on grammatical and statistical constraints.

Dynamic programming (the Viterbi algorithm) can be used on the sentences to obtain the same results, by finding the shortest paths in the underlying DAG.

Finding Shortest Paths

In an unweighted graph, the cost of a path is just the number of edges on it, so a shortest path can be found in O(n+m) time via breadth-first search.

In a weighted graph, the weight of a path between two vertices is the sum of the weights of the edges on the path.

BFS will not work on weighted graphs because sometimes visiting more edges can lead to a shorter distance, e.g. 1+1+1+1+1+1+1 < 10.

Note that there can be an exponential number of shortest paths between two nodes - so we cannot report all shortest paths efficiently.

Note that negative cost cycles render the problem of finding the shortest path meaningless, since you can always loop around the negative cost cycle once more to reduce the cost of the path.
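The contrast between counting edges and summing weights can be made concrete with a small sketch. The graph below is a hypothetical example, not one from the lecture: a direct edge of weight 10 between `a` and `h`, plus a seven-edge chain of weight-1 edges joining the same two vertices. The function name `bfs_edge_counts` and the adjacency-list layout are assumptions chosen for illustration.

```python
from collections import deque


def bfs_edge_counts(adj, source):
    """Return the fewest-edges distance from source to each reachable vertex."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:  # the first visit uses a fewest-edges path
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


# Hypothetical undirected graph: one heavy direct edge a-h and a
# seven-edge chain a-b-c-d-e-f-g-h whose edges each weigh 1.
chain = "abcdefgh"
weights = {("a", "h"): 10}
for u, v in zip(chain, chain[1:]):
    weights[(u, v)] = 1

adj = {v: [] for v in chain}
for u, v in weights:
    adj[u].append(v)
    adj[v].append(u)

hops = bfs_edge_counts(adj, "a")
chain_cost = sum(weights[(u, v)] for u, v in zip(chain, chain[1:]))
direct_cost = weights[("a", "h")]
```

Here BFS reaches `h` in a single edge, yet that edge costs 10, while the seven-edge chain costs only 7. The fewest-edges path is therefore not the least-weight path in this graph.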

Thus in our discussions, we will assume that all edge weights are positive. Other algorithms deal correctly with negative cost edges.

Minimum spanning trees are unaffected by negative cost edges.

Dijkstra's Algorithm

We can use Dijkstra's algorithm to find the shortest path between any two vertices s and t in G.

The principle behind Dijkstra's algorithm is that if s, ..., x, ..., t is the shortest path from s to t, then s, ..., x had better be the shortest path from s to x.

This suggests a dynamic programming-like strategy, where we store the distance from s to all nearby nodes, and use them to find the shortest path to more distant nodes.

The shortest path from s to s has length d(s,s)=0. If all edge weights are positive, the smallest edge incident to s, say (s,x), defines d(s,x).

We can use an array to store the length of the shortest path to each node. Initialize each to ∞ to start.

As soon as we establish the shortest path from s to a new node x, we go through each of its incident edges to see if there is a better way from s to other nodes through x.

for i=1 to n, dist[i]=∞
for each edge (s,v), dist[v]=d(s,v)
last=s
while (last ≠ t)
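The array-based strategy described above can be sketched in Python. This is a minimal sketch under stated assumptions, not the lecture's own code: the graph is a dictionary mapping each vertex to a dictionary of neighbors and positive edge weights, and the names `dijkstra`, `graph`, and `unknown` are hypothetical. The selection rule (always take the not-yet-settled vertex with the smallest tentative distance) is the standard one for Dijkstra's algorithm and is assumed here.

```python
import math


def dijkstra(graph, s, t):
    """Length of the shortest s-t path, assuming all edge weights are positive."""
    dist = {v: math.inf for v in graph}  # initialize each distance to infinity
    dist[s] = 0                          # d(s, s) = 0
    unknown = set(graph)                 # vertices whose distance is not yet final
    while unknown:
        # Take the unknown vertex with the smallest tentative distance.
        last = min(unknown, key=dist.__getitem__)
        if last == t or dist[last] == math.inf:
            break  # t is settled, or everything left is unreachable
        unknown.remove(last)
        # Check whether going through `last` improves any neighbor.
        for x, w in graph[last].items():
            if dist[last] + w < dist[x]:
                dist[x] = dist[last] + w
    return dist[t]


# Hypothetical undirected example graph.
graph = {
    "s": {"a": 2, "b": 5},
    "a": {"s": 2, "b": 1, "t": 6},
    "b": {"s": 5, "a": 1, "t": 2},
    "t": {"a": 6, "b": 2},
}
shortest = dijkstra(graph, "s", "t")
```

In this example the path s, a, b, t has weight 2+1+2 = 5, which beats both s, b, t (weight 7) and s, a, t (weight 8). Scanning all unknown vertices for the minimum costs O(n) per step, so this sketch runs in O(n^2) time overall; a priority queue would be a different design choice for sparse graphs.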

