74 C. Cheng

Table 3. Ten models: model configuration in terms of $(m, m_1, \sigma)$ and determination of true alternative hypotheses by $X_1, \ldots, X_4$, $X_{190}$ and $X_{221}$, where $m_1$ is the number of true alternative hypotheses; hence $\pi_0 = 1 - m_1/m$. $n_k = 3$ and $K = 4$ for all models. $N(0, \sigma^2)$ denotes normal random noise.

| Model | $m$ | $m_1$ | $\sigma$ | True $H_A$'s in addition to $X_1, \ldots, X_4$, $X_{190}$, $X_{221}$ |
|---|---|---|---|---|
| 1 | 3000 | 500 | 3 | $X_i = X_1 + N(0, \sigma^2)$, $i = 5, \ldots, 16$ |
| | | | | $X_i = -X_1 + N(0, \sigma^2)$, $i = 17, \ldots, 25$ |
| | | | | $X_i = X_2 + N(0, \sigma^2)$, $i = 26, \ldots, 60$ |
| | | | | $X_i = -X_2 + N(0, \sigma^2)$, $i = 61, \ldots, 70$ |
| | | | | $X_i = X_3 + N(0, \sigma^2)$, $i = 71, \ldots, 100$ |
| | | | | $X_i = -X_3 + N(0, \sigma^2)$, $i = 101, \ldots, 110$ |
| | | | | $X_i = X_4 + N(0, \sigma^2)$, $i = 111, \ldots, 150$ |
| | | | | $X_i = -X_4 + N(0, \sigma^2)$, $i = 151, \ldots, 189$ |
| | | | | $X_i = X_{190} + N(0, \sigma^2)$, $i = 191, \ldots, 210$ |
| | | | | $X_i = -X_{190} + N(0, \sigma^2)$, $i = 211, \ldots, 220$ |
| | | | | $X_i = X_{221} + N(0, \sigma^2)$, $i = 222, \ldots, 250$ |
| | | | | $X_i = 2X_{i-250} + N(0, \sigma^2)$, $i = 251, \ldots, 500$ |
| 2 | 3000 | 500 | 1 | the same as Model 1 |
| 3 | 3000 | 250 | 3 | the same as Model 1, except that only the first 250 are true $H_A$'s |
| 4 | 3000 | 250 | 1 | the same as Model 3 |
| 5 | 3000 | 32 | 3 | $X_i = X_1 + N(0, \sigma^2)$, $i = 5, \ldots, 8$ |
| | | | | $X_i = X_2 + N(0, \sigma^2)$, $i = 9, \ldots, 12$ |
| | | | | $X_i = X_3 + N(0, \sigma^2)$, $i = 13, \ldots, 16$ |
| | | | | $X_i = X_4 + N(0, \sigma^2)$, $i = 17, \ldots, 20$ |
| | | | | $X_i = X_{190} + N(0, \sigma^2)$, $i = 191, \ldots, 195$ |
| | | | | $X_i = X_{221} + N(0, \sigma^2)$, $i = 222, \ldots, 226$ |
| 6 | 3000 | 32 | 1 | the same as Model 5 |
| 7 | 3000 | 6 | 3 | none, except $X_1, \ldots, X_4$, $X_{190}$, $X_{221}$ |
| 8 | 3000 | 6 | 1 | the same as Model 7 |
| 9 | 10000 | 15 | 3 | $X_i = X_1 + N(0, \sigma^2)$, $i = 5, 6$ |
| | | | | $X_i = X_2 + N(0, \sigma^2)$, $i = 7, 8$ |
| | | | | $X_i = X_3 + N(0, \sigma^2)$, $i = 9, 10$ |
| | | | | $X_i = X_4 + N(0, \sigma^2)$, $i = 11, 12$ |
| | | | | $X_{191} = X_{190} + N(0, \sigma^2)$ |
| 10 | 10000 | 15 | 1 | the same as Model 9 |

Acknowledgments. I am grateful to Dr. Stan Pounds, two referees, and Professor Javier Rojo for their comments and suggestions, which substantially improved this paper.

References

[1] Abramovich, F., Benjamini, Y., Donoho, D. and Johnstone, I. (2000). Adapting to unknown sparsity by controlling the false discovery rate. Technical Report 2000-19, Department of Statistics, Stanford University, Stanford, CA.

[2] Allison, D. B., Gadbury, G. L., Heo, M., Fernandez, J. R., Lee, C.-K., Prolla, T. A. and Weindruch, R. (2002). A mixture model approach for the analysis of microarray gene expression data. Comput. Statist. Data Anal. 39, 1–20.

[3] Benjamini, Y. and Hochberg, Y. (2000). On the adaptive control of the false discovery rate in multiple testing with independent statistics. Journal of Educational and Behavioral Statistics 25, 60–83.

[4] Benjamini, Y. and Hochberg, Y. (1995). Controlling the false discovery rate: a practical and powerful approach to multiple testing. J. R. Stat. Soc. Ser. B Stat. Methodol. 57, 289–300.

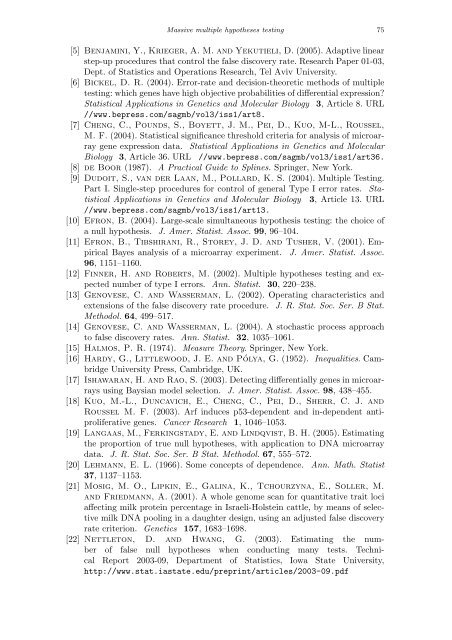

Massive multiple hypotheses testing 75

[5] Benjamini, Y., Krieger, A. M. and Yekutieli, D. (2005). Adaptive linear step-up procedures that control the false discovery rate. Research Paper 01-03, Dept. of Statistics and Operations Research, Tel Aviv University.

[6] Bickel, D. R. (2004). Error-rate and decision-theoretic methods of multiple testing: which genes have high objective probabilities of differential expression? Statistical Applications in Genetics and Molecular Biology 3, Article 8. URL //www.bepress.com/sagmb/vol3/iss1/art8.

[7] Cheng, C., Pounds, S., Boyett, J. M., Pei, D., Kuo, M.-L. and Roussel, M. F. (2004). Statistical significance threshold criteria for analysis of microarray gene expression data. Statistical Applications in Genetics and Molecular Biology 3, Article 36. URL //www.bepress.com/sagmb/vol3/iss1/art36.

[8] de Boor, C. (1987). A Practical Guide to Splines. Springer, New York.

[9] Dudoit, S., van der Laan, M. and Pollard, K. S. (2004). Multiple testing. Part I. Single-step procedures for control of general Type I error rates. Statistical Applications in Genetics and Molecular Biology 3, Article 13. URL //www.bepress.com/sagmb/vol3/iss1/art13.

[10] Efron, B. (2004). Large-scale simultaneous hypothesis testing: the choice of a null hypothesis. J. Amer. Statist. Assoc. 99, 96–104.

[11] Efron, B., Tibshirani, R., Storey, J. D. and Tusher, V. (2001). Empirical Bayes analysis of a microarray experiment. J. Amer. Statist. Assoc. 96, 1151–1160.

[12] Finner, H. and Roberts, M. (2002). Multiple hypotheses testing and expected number of type I errors. Ann. Statist. 30, 220–238.

[13] Genovese, C. and Wasserman, L. (2002). Operating characteristics and extensions of the false discovery rate procedure. J. R. Stat. Soc. Ser. B Stat. Methodol. 64, 499–517.

[14] Genovese, C. and Wasserman, L. (2004). A stochastic process approach to false discovery rates. Ann. Statist. 32, 1035–1061.

[15] Halmos, P. R. (1974). Measure Theory. Springer, New York.

[16] Hardy, G., Littlewood, J. E. and Pólya, G. (1952). Inequalities. Cambridge University Press, Cambridge, UK.

[17] Ishwaran, H. and Rao, S. (2003). Detecting differentially expressed genes in microarrays using Bayesian model selection. J. Amer. Statist. Assoc. 98, 438–455.

[18] Kuo, M.-L., Duncavich, E., Cheng, C., Pei, D., Sherr, C. J. and Roussel, M. F. (2003). Arf induces p53-dependent and -independent antiproliferative genes. Cancer Research 1, 1046–1053.

[19] Langaas, M., Ferkingstad, E. and Lindqvist, B. H. (2005). Estimating the proportion of true null hypotheses, with application to DNA microarray data. J. R. Stat. Soc. Ser. B Stat. Methodol. 67, 555–572.

[20] Lehmann, E. L. (1966). Some concepts of dependence. Ann. Math. Statist. 37, 1137–1153.

[21] Mosig, M. O., Lipkin, E., Galina, K., Tchourzyna, E., Soller, M. and Friedmann, A. (2001). A whole genome scan for quantitative trait loci affecting milk protein percentage in Israeli-Holstein cattle, by means of selective milk DNA pooling in a daughter design, using an adjusted false discovery rate criterion. Genetics 157, 1683–1698.

[22] Nettleton, D. and Hwang, G. (2003). Estimating the number of false null hypotheses when conducting many tests. Technical Report 2003-09, Department of Statistics, Iowa State University, http://www.stat.iastate.edu/preprint/articles/2003-09.pdf
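The index bookkeeping in Table 3 can be checked mechanically. The following is a minimal sketch, not the author's simulation code: it only enumerates which indices are true alternatives in a few of the models and recomputes $m_1$ and $\pi_0 = 1 - m_1/m$. The names `BASE`, `DERIVED`, `M`, `true_alternatives` and `pi0` are hypothetical, and adjacent index ranges from the table are merged because the sign and parent variable do not affect the count.

```python
# Indices that are true alternatives in every model of Table 3.
BASE = {1, 2, 3, 4, 190, 221}

# Inclusive index ranges of the additional (derived) true alternatives.
# Adjacent ranges from the table are merged; only the indices matter here.
DERIVED = {
    1: [(5, 189), (191, 220), (222, 250), (251, 500)],
    5: [(5, 20), (191, 195), (222, 226)],
    7: [],
    9: [(5, 12), (191, 191)],
}

# Total number of hypotheses m for each model listed above.
M = {1: 3000, 5: 3000, 7: 3000, 9: 10000}


def true_alternatives(model):
    """Return the set of indices i for which X_i is a true alternative."""
    idx = set(BASE)
    for lo, hi in DERIVED[model]:
        idx.update(range(lo, hi + 1))
    return idx


def pi0(model):
    """Proportion of true nulls, pi_0 = 1 - m_1 / m."""
    return 1 - len(true_alternatives(model)) / M[model]


# Model 3 keeps only the first 250 true alternatives of Model 1.
model3 = {i for i in true_alternatives(1) if i <= 250}

if __name__ == "__main__":
    for k in sorted(DERIVED):
        print(k, len(true_alternatives(k)), round(pi0(k), 4))
    print(3, len(model3), round(1 - len(model3) / 3000, 4))
```

The counts produced this way should agree with the $m_1$ column of the table; Models 2, 4, 6, 8 and 10 share the index sets of Models 1, 3, 5, 7 and 9 and differ only in $\sigma$.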
